Progress on Messaging, I Think I Got It

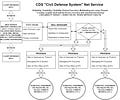

What really makes my system powerful is automatic universal message passing

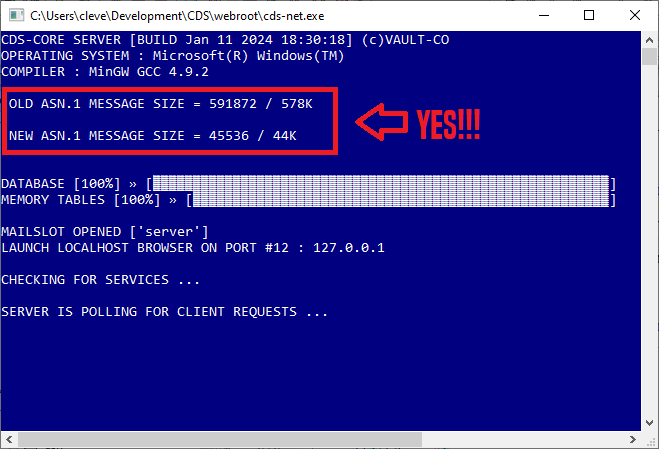

I actually managed to work three days uninterrupted this week without getting distracted. I went in, tore up all the ASN.1 messaging code and redesigned the format to fit memory-restricted computers.

As people will see shortly, my system is quite magical when everything is working. All sorts of processes and agents run together, coordinating message passing over the network, in internal memory via IPC (mailslots on all platforms) and across heterogeneous systems with plug-n-play capacity, all working seamlessly to make even a modest machine seem like a supercomputer. The modules I already have are spectacular, but if the system is written correctly it will have almost unlimited potential to hook up to other systems and control them through a web browser interface used for general SCADA and other functionality.

The messaging is really the core of the entire system. Even passing interfaces themselves around as messages will be implemented later on.

I started with the assumption that whatever the message, I had to keep it under 64K, because 600K for some messages is ridiculous. No message system can chuck 600K around over UDP, websockets or IPC without soon choking and clogging up. I could go into a deep explanation of the rationale for avoiding all memory allocation after startup, but I copy the policy of the European Space Agency … there is no room for buffer overflows in space. Such events can leave the hardware in an indeterminate state and it might crash at any moment. Buffer overflows cause memory corruption, and in space there is no need for blue screens telling you to reboot your machine. To avoid these problems, all buffers have to be big enough (1-N * SIZE) to handle any possible incoming or outgoing message.
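As a rough sketch of that fixed-buffer policy, here is one way a pool of message slots could be reserved at compile time. The names (`MSG_MAX`, `SLOT_COUNT`, `slot_acquire`, `slot_store`, `slot_release`) and the slot count are assumptions made up for illustration, not the actual code.

```c
#include <stddef.h>
#include <string.h>

#define MSG_MAX    65535u  /* assumed ceiling: every message stays under 64K */
#define SLOT_COUNT 4u      /* assumed number of messages in flight at once */

/* All storage is reserved at compile time; nothing is allocated at run time. */
static unsigned char slot_data[SLOT_COUNT][MSG_MAX];
static size_t        slot_len[SLOT_COUNT];
static int           slot_used[SLOT_COUNT];

/* Return the index of a free slot, or -1 when every slot is busy. */
int slot_acquire(void)
{
    size_t i;
    for (i = 0; i < SLOT_COUNT; i++) {
        if (!slot_used[i]) {
            slot_used[i] = 1;
            slot_len[i] = 0;
            return (int)i;
        }
    }
    return -1;
}

/* Copy a message into a slot. Oversize input is rejected, never truncated. */
int slot_store(int slot, const void *src, size_t len)
{
    if (slot < 0 || (size_t)slot >= SLOT_COUNT || !slot_used[slot]) return -1;
    if (len > MSG_MAX) return -1;
    memcpy(slot_data[slot], src, len);
    slot_len[slot] = len;
    return 0;
}

/* Hand the slot back to the pool. */
void slot_release(int slot)
{
    if (slot >= 0 && (size_t)slot < SLOT_COUNT) slot_used[slot] = 0;
}
```

Because the length check happens before the copy, a message that is too big is refused at the door instead of overflowing into a neighbouring slot.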

If you are serious about writing a truly hardened system, like a satellite application or a fallout shelter computer that could run unattended forever (BETA BATTERIES are finally here! I’ve been waiting on them for decades with this SYSTEM in mind!!!), then you have to observe a few important rules:

No threads. Threads are indeterminate and sooner or later they crash. Pseudo-intellectuals who don’t code much love them. They are anathema to hardened embedded devices and systems built to be tough and never crash. Use coprocessing or state based processing and polling.

No dynamic memory allocation at run-time. Start slinging around memory both allocating and releasing it a thousand times a second and sooner or later you’re going to make an error and corrupt memory, run out of memory due to least-squares failures or just write crappy code that forgets to release after allocating somewhere.

No locks or synchronization code on any resources. This leads to bottlenecks and race conditions even in coprocessing. All resources should be singletons and universally accessible by any part of the program at any time.
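The "state based processing and polling" idea from the first rule can be sketched like this. The states, the `poll_task` structure and the function names are hypothetical, chosen only to show the shape of a single-threaded loop in which every task does one small non-blocking step per pass.

```c
#include <stddef.h>

/* Hypothetical states for one module; the real system's states are not shown. */
typedef enum { ST_IDLE, ST_READING, ST_SENDING } poll_state;

typedef struct {
    poll_state state;
    int pending;     /* set by the caller when input is waiting */
    int sent_count;  /* messages completed so far */
} poll_task;

/* One non-blocking step: do a small piece of work, then return to the loop. */
void poll_step(poll_task *t)
{
    switch (t->state) {
    case ST_IDLE:
        if (t->pending) { t->pending = 0; t->state = ST_READING; }
        break;
    case ST_READING:
        t->state = ST_SENDING;
        break;
    case ST_SENDING:
        t->sent_count++;
        t->state = ST_IDLE;
        break;
    }
}

/* Single-threaded main loop: every task gets exactly one step per pass. */
void poll_all(poll_task *tasks, size_t count, int passes)
{
    int p;
    size_t i;
    for (p = 0; p < passes; p++)
        for (i = 0; i < count; i++)
            poll_step(&tasks[i]);
}
```

Since only one step runs at a time and each step returns quickly, the tasks never preempt each other, which is why no locks are needed around the shared state in this sketch.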

So I studied the TASTE manual for the European Space Agency's ASN.1 compiler and discovered that nearly any message can carry a single byte declaring continuance: it marks the message as a standalone unit or as part of a series. I structured my code accordingly. Even the most gigantic messages can be sent and received in blocks of under 64K that will fit into a mailslot buffer or a UDP datagram, using the notion of continuance signaling.
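Here is a minimal sketch of continuance signaling, assuming a made-up segment layout (one continuance byte, a length and a payload). It is not the TASTE or ASN.1 wire encoding, and `SEG_PAYLOAD_MAX` is kept small so the example is easy to follow; in the real system the blocks would be just under 64K.

```c
#include <stddef.h>
#include <string.h>

#define SEG_PAYLOAD_MAX 1024u  /* assumed small block size, for illustration only */

enum { SEG_FINAL = 0, SEG_MORE = 1 };  /* hypothetical continuance values */

typedef struct {
    unsigned char  continuance;  /* SEG_MORE: more blocks follow; SEG_FINAL: last one */
    unsigned short length;       /* payload bytes used in this block */
    unsigned char  payload[SEG_PAYLOAD_MAX];
} segment;

/* Sender: fill `out` with the next block of `src`, starting at *offset.
   Returns 1 if a block was produced, 0 when nothing is left to send. */
int seg_next(const unsigned char *src, size_t total, size_t *offset, segment *out)
{
    size_t remaining, n;
    if (*offset >= total) return 0;
    remaining = total - *offset;
    n = remaining < SEG_PAYLOAD_MAX ? remaining : SEG_PAYLOAD_MAX;
    memcpy(out->payload, src + *offset, n);
    out->length = (unsigned short)n;
    *offset += n;
    out->continuance = (*offset < total) ? SEG_MORE : SEG_FINAL;
    return 1;
}

/* Receiver: append a block to a fixed buffer.
   Returns 1 when the message is complete, 0 when more blocks are expected,
   and -1 if the block would overflow the buffer. */
int seg_append(unsigned char *dst, size_t cap, size_t *used, const segment *in)
{
    if (in->length > cap - *used) return -1;
    memcpy(dst + *used, in->payload, in->length);
    *used += in->length;
    return in->continuance == SEG_FINAL ? 1 : 0;
}
```

The sender only ever needs one `segment` of working memory, no matter how large the whole message is.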

For example, you can describe up to 8 properties of a device in a Manifest message, which is sufficient for 90% of modules you might want to control. What if the device has 64 or 128 properties? You can send the same message with a segment value to simply append to the descriptor. Theoretically, if it’s a nuclear power plant you are controlling and you want to know the temperature of 400 rods in the reactor, you could receive and process this data even on memory-restricted devices with continuance segments.
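A sketch of how a segment value could append to a device descriptor. Only the figure of 8 properties per Manifest comes from the text above; the field names, the `property` layout and the `DEVICE_PROPS_MAX` bound are assumptions for illustration.

```c
#include <stddef.h>

#define MANIFEST_PROPS   8u    /* properties per Manifest message, as described */
#define DEVICE_PROPS_MAX 512u  /* assumed upper bound for a single device */

typedef struct { unsigned short id; long value; } property;  /* hypothetical fields */

typedef struct {
    unsigned char segment;  /* 0 for the first message, 1 for the next 8 properties, ... */
    unsigned char count;    /* properties used in this message, 1..8 */
    property      props[MANIFEST_PROPS];
} manifest_msg;

typedef struct {
    size_t   count;         /* properties known so far */
    property props[DEVICE_PROPS_MAX];
} device_descriptor;

/* Place a segment's properties at index segment * 8 in the descriptor.
   Returns 0 on success, -1 if the message is malformed or will not fit. */
int manifest_apply(device_descriptor *d, const manifest_msg *m)
{
    size_t base = (size_t)m->segment * MANIFEST_PROPS;
    size_t i;
    if (m->count == 0 || m->count > MANIFEST_PROPS) return -1;
    if (base + m->count > DEVICE_PROPS_MAX) return -1;
    for (i = 0; i < m->count; i++)
        d->props[base + i] = m->props[i];
    if (base + m->count > d->count)
        d->count = base + m->count;
    return 0;
}
```

With this layout the 400-rod case is 50 Manifest messages, segments 0 through 49, each handled with the same small fixed-size structure.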

Here’s where I am as of tonight.

A single structure can hold any of 42 incoming messages in binary and the largest message in the union field is 44K, very manageable. Doable even under real mode DOS possibly if I ever compile it again for that platform.
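The single-structure idea can be sketched as a tagged union. The three message kinds below are invented stand-ins for the 42 real ones, and the 44K member only approximates the size of the largest message mentioned above.

```c
#include <stddef.h>

/* Hypothetical message kinds; the real system has 42 of them. */
typedef enum { MSG_HEARTBEAT = 1, MSG_READING = 2, MSG_BULK = 3 } msg_kind;

typedef struct { unsigned long uptime_s; } msg_heartbeat;
typedef struct { unsigned short property_id; long value; } msg_reading;
typedef struct {
    unsigned short length;
    unsigned char  data[44u * 1024u];  /* roughly the 44K largest member */
} msg_bulk;

typedef struct {
    msg_kind kind;  /* tag saying which union member is valid */
    union {
        msg_heartbeat heartbeat;
        msg_reading   reading;
        msg_bulk      bulk;
    } body;
} any_message;

/* One statically allocated instance can receive every incoming message. */
static any_message inbox;

/* Dispatch on the tag. Returns the number of meaningful body bytes,
   or -1 for a kind this build does not know. */
long message_body_size(const any_message *m)
{
    switch (m->kind) {
    case MSG_HEARTBEAT: return (long)sizeof m->body.heartbeat;
    case MSG_READING:   return (long)sizeof m->body.reading;
    case MSG_BULK:      return (long)m->body.bulk.length;
    default:            return -1;
    }
}
```

The union is only as large as its biggest member, so the whole inbox stays under 64K however many small message kinds are added.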

I won’t bore you with the details of the production chain, but it’s basically this: edit the abstract message global descriptor file in text, run the batch file and regenerate the ANSI C Encoder/Decoder with one keypress. Then recompile. This really helps when you suddenly realize you could use an extra data field here or there and don’t have an entire day to handcode the changes yourself. It really speeds things along and shortens the code-compile-test cycle.

This is a very positive development, because I’ve been rocking with madness for months over just finishing the message format. I know it sounds like I am a drama queen, but I saw a flaw in the initial design: major changes would flow on to further changes and years of work in things that should have been designed to be much easier.

Encode-Decode of messages is so fast it is nearly as quick as a memory copy. The JSON messages I used ten years ago look like stone age tech compared to lightning fast ASN.1 binary whooshing about. It’s all binary, which means encryption can be introduced anytime later on if desired. It also travels much faster inside the machine itself over IPC (Inter-Process Communication), which fully implements plug-n-play: new modules hook up and register simply by being run! After that they can be configured to auto-boot with the server at startup!

This startup screen is just the first console to come up. I have had 90% of the critical functionality in database management, reporting and configuration working through the web browser for years. I couldn’t get on to polishing that until I got these messages right.

I’ve only ever used the capacity for Remote Procedure Calls (RPCs) for one function: to tell a machine to perform some self-diagnosis. That ability, like on any platform, is wide open to all kinds of expansion. I will probably amend that quite a bit once I get all the regular stuff working. I based a lot of that on the D-BUS format, which is quite popular in big industrial SCADA, and as with BACNET there may someday be a message bridge component for the protocol. All metrics and property descriptors in CDS are borrowed heavily from BACNET standards.

Many of these protocols and message standards have grown increasingly complex and drifted away from their humble beginnings. I have pursued a coding strategy of sheer village idiot simplicity in my design and where I thought things were getting too pretentious I have trimmed them back to “DoStuffRight();” calls wherever possible. Our goal is not to impress with sophistication but to write an application so solid it runs forever as reliable as dusk and dawn.

Regards, Tex

I understood MOST of what you typed...heh heh. As a 35 year IT veteran, I can assure other readers that this architecture appears to be VERY VERY sound. That should give you confidence that this thing will run, and run, and run. Great job!

Not sure I completely agree with you on the 'threads' knock down. I think a lot depends on the particular OS in use, and exactly how well (or not) you manage your queue handler. Also, like you say, the peeps writing the code. I've worked in radio, military apps, embedded systems / hardware and commercial banking - banking by far was the most demanding for message/queue transaction routing (high volume credit card processing). That was 1997/8 - all written in C, but no way you were doing ~50K transactions per hour on one thread (used Stratus/VOS). Servers ran for years unattended in Amex's / Phoenix black-out building.


Now semi-retired, but I have one app still in production - an AVL system that the local Sheriff's Office uses. I'd never have completed it if I'd written it in C, ha. The REST API and the Azure Function receiver app has been up since 2019 and handles several hundred location events per minute, sent by cars with embedded devices running a Python app. All server side and client side code written in C#.


No restarts. No crashes. Just whatever M$ does with their servers from time to time which I have no control over.


Curious as to your particular application - 50+ years runtime?? That is longer than I'll be alive, ha.